The Hallucination Problem Isn't About Accuracy, It's About Trust

The question isn't whether you should use AI. It's which AI you should trust with critical tasks.

The danger isn’t that AI makes mistakes. It’s that AI makes mistakes it doesn’t admit to. When an AI generates a response, it doesn’t say “I don’t know, but here’s what I think.” It says “Here’s the answer,” with the same confidence it would use if it were certain. That’s the core problem.

Consider the implications in different contexts:

In legal research, an AI might cite a fabricated case precedent that a junior associate takes as gospel. The result could be a lost case or a regulatory violation.

In financial analysis, an AI might generate a report with invented data points. Those false figures could influence investment decisions that cost millions.

In customer support, incorrect technical advice might damage products, create safety risks, and destroy trust.

In healthcare, misdiagnoses or suggested treatments based on false information could have life-and-death consequences.

The issue isn’t that these things happen occasionally. It’s that they happen predictably, at rates that should disqualify these models from high-stakes use, yet the models are being used anyway.

Grok’s Breakthrough: A Different Approach to Reliability

Most AI models follow the same flawed approach: bigger is better. Stack more parameters, train on more data, and hope for the best. But Grok took a different path. Instead of just making the model larger, its developers made it smarter by changing how answers are verified.

Grok 4.20 doesn’t rely on a single, monolithic AI. It uses four specialized agents that work in parallel, debate with each other, and only agree on an answer when they’re all confident it’s correct. This isn’t just a technical trick. It’s a fundamental shift in how AI verifies its own outputs.

Harper pulls real-time data from the web and the X Firehose to ensure facts are current. Benjamin performs mathematical and logical verification to catch errors in reasoning. Lucas generates creative solutions but must justify them with evidence. Grok, the orchestrator, outputs only answers that the other three agents agree on.
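To make the consensus rule concrete, here is a minimal sketch of an orchestrator that releases an answer only when every agent is confident and all answers match. It is an illustration of the idea described above, not Grok's actual implementation: the `Agent` signature, the `orchestrate` function, and the stub agents are all hypothetical.

```python
from typing import Callable, Optional

# Hypothetical agent signature: takes a question, returns (answer, is_confident).
Agent = Callable[[str], tuple[str, bool]]


def orchestrate(question: str, agents: dict[str, Agent]) -> Optional[str]:
    """Return an answer only if every agent is confident and all answers match."""
    answers = set()
    for name, agent in agents.items():
        answer, confident = agent(question)
        if not confident:
            return None  # one unsure agent is enough to withhold the answer
        answers.add(answer.strip().lower())  # normalize before comparing
    # Unanimity means exactly one distinct normalized answer.
    return answers.pop() if len(answers) == 1 else None


# Stub agents standing in for the roles named in the article (illustrative only).
def harper(q: str) -> tuple[str, bool]:
    return ("Paris", True)


def benjamin(q: str) -> tuple[str, bool]:
    return ("paris", True)


def lucas(q: str) -> tuple[str, bool]:
    return ("Paris ", True)


def unsure(q: str) -> tuple[str, bool]:
    return ("Lyon", False)


agreed = orchestrate(
    "Capital of France?",
    {"harper": harper, "benjamin": benjamin, "lucas": lucas},
)
withheld = orchestrate("Capital of France?", {"harper": harper, "lucas": unsure})
print(agreed, withheld)
```

The design choice worth noticing is that the function returns nothing at all when agreement fails, so a caller has to handle "no answer" explicitly instead of receiving a confident guess.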

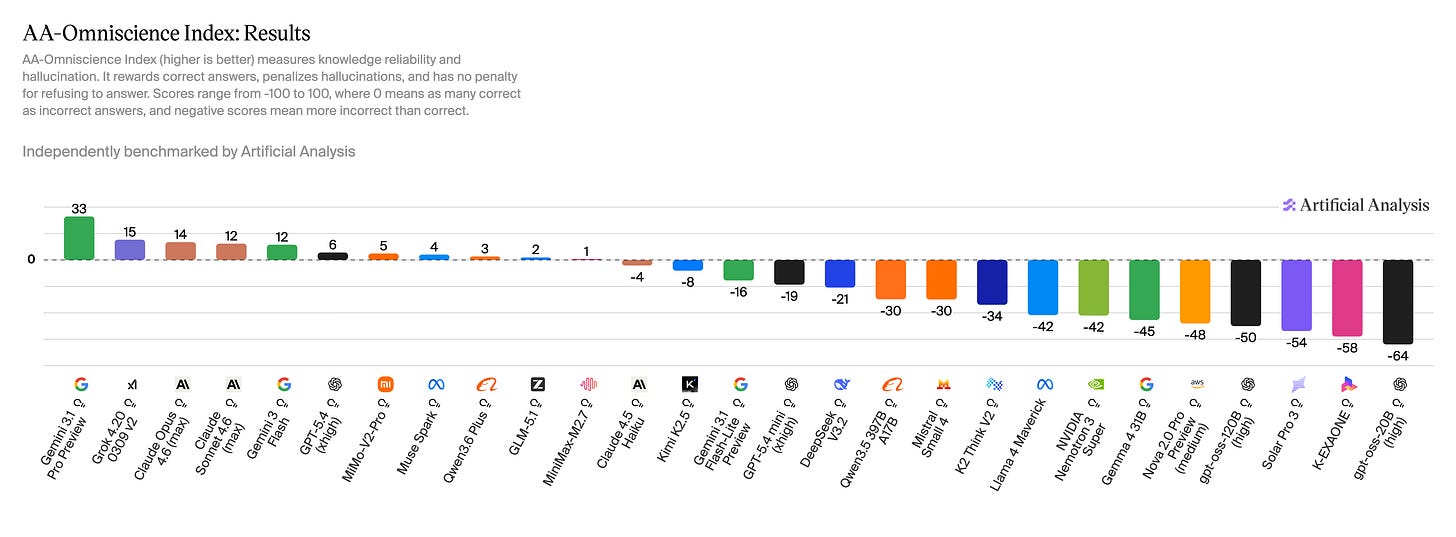

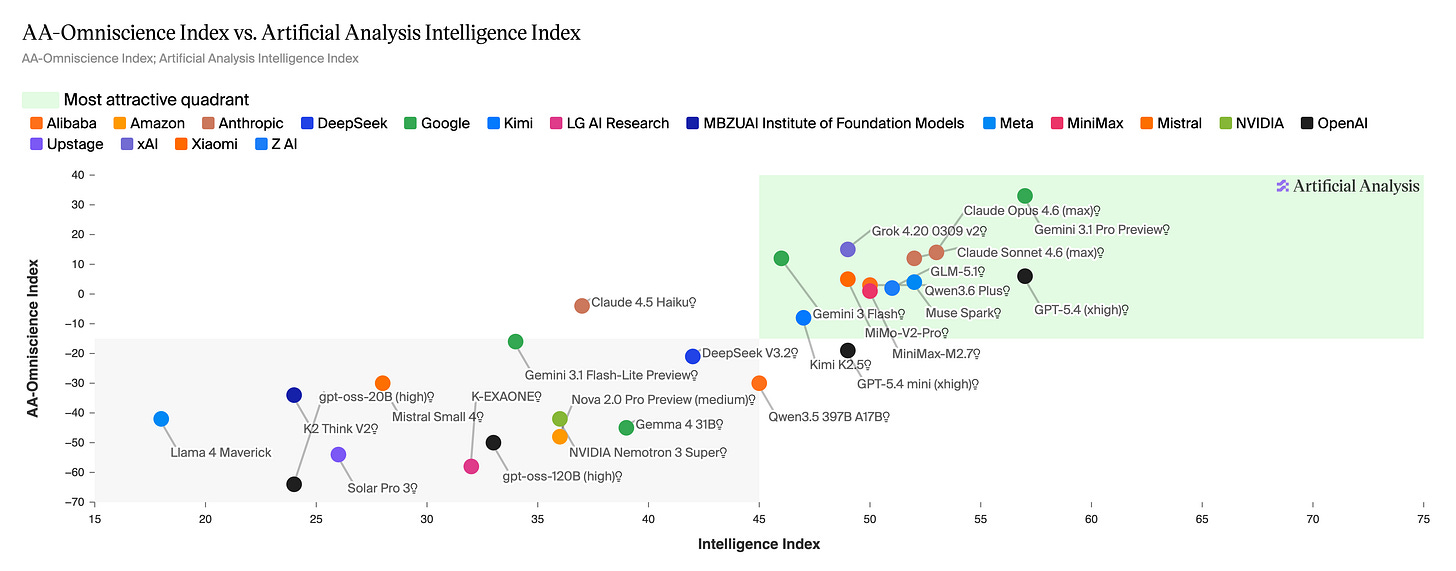

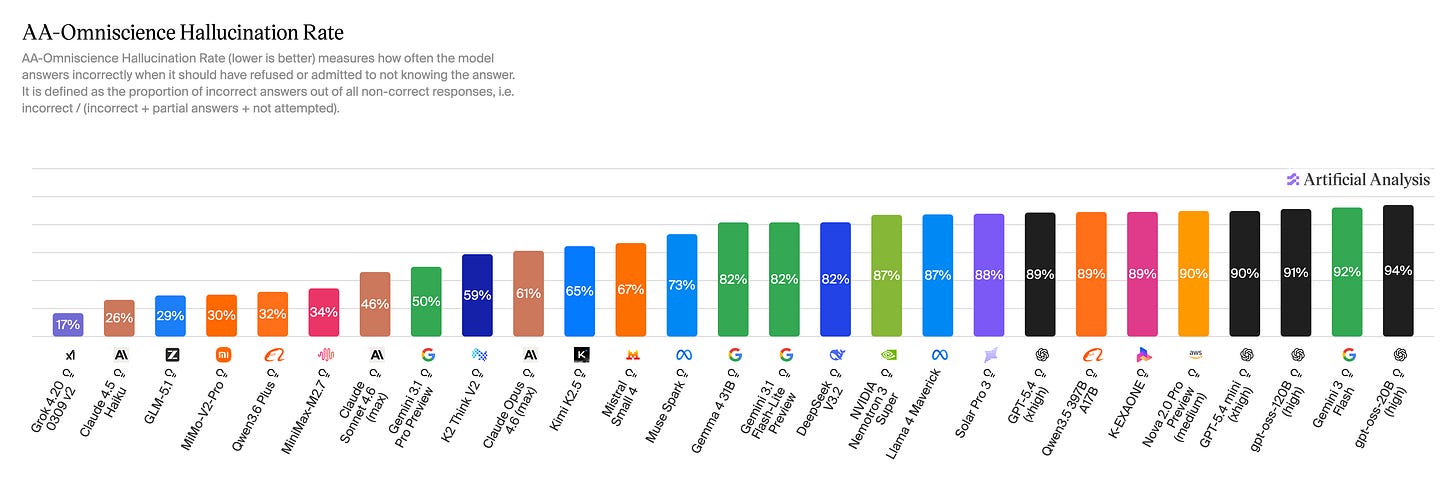

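The result is a 65 percent relative reduction in hallucinations: the rate drops from about 12 percent to just 4.2 percent. Independent testing points in the same direction, with Grok 4.20 achieving a 78 percent non-hallucination rate. That score implies a 22 percent hallucination rate on its own test set, so it is evidently measured differently from the 4.2 percent figure, and the two numbers should not be compared directly. On that test, though, it’s far ahead of competitors like Claude Opus 4.6 and GPT-5.4.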
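The arithmetic behind these figures is easy to check. The short sketch below uses only the numbers quoted above; the function name `relative_reduction` is ours, chosen for illustration.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction by which a rate falls, relative to its starting value."""
    return (before - after) / before


baseline_rate = 0.12   # "about 12 percent", as quoted in the article
improved_rate = 0.042  # "4.2 percent", as quoted in the article

reduction = relative_reduction(baseline_rate, improved_rate)
absolute_drop = baseline_rate - improved_rate

print(f"relative reduction: {reduction:.0%}")                          # 65%
print(f"absolute drop: {absolute_drop * 100:.1f} percentage points")   # 7.8

# A 78 percent non-hallucination rate implies this hallucination rate
# on whatever test produced it:
implied_rate = 1 - 0.78
print(f"implied hallucination rate: {implied_rate:.0%}")               # 22%
```

The 65 percent figure is a relative change, while the drop in absolute terms is 7.8 percentage points. Both are accurate descriptions of the same two numbers.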

But the real innovation isn’t just in the numbers. It’s in the architecture. Traditional AI models are like solo performers: brilliant in their own right, but prone to mistakes when pushed beyond their limits. Grok’s multi-agent system is like a team of experts. No single agent can override the others, and the final answer only emerges when there’s consensus.

This isn’t just about being more accurate. It’s about being trustworthy.

The Business Case for Trustworthy AI

Imagine you’re a law firm using AI to research case law. With a model that hallucinates 35 percent of the time, roughly one in three sources might be fabricated. That’s not just a risk. That’s a reputation killer. At Grok’s 4.2 percent rate, that falls to roughly one in 24, which changes the equation entirely.
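To see how a per-source rate translates into risk for a whole brief, here is a rough sketch. It assumes each citation is fabricated independently and with the same probability, which is a simplification made for illustration; the function name and the ten-citation brief are our own choices.

```python
def p_at_least_one_fabricated(rate: float, n_sources: int) -> float:
    """Chance that a document with n_sources citations contains at least
    one fabricated source, assuming independent errors at a fixed rate."""
    return 1 - (1 - rate) ** n_sources


citations_in_brief = 10  # hypothetical brief length

p_high = p_at_least_one_fabricated(0.35, citations_in_brief)
p_low = p_at_least_one_fabricated(0.042, citations_in_brief)

for label, p in (("35% rate", p_high), ("4.2% rate", p_low)):
    print(f"{label}: {p:.1%} chance of at least one fabricated source")
```

Under these assumptions the lower rate cuts the risk substantially, but the chance of at least one bad citation still grows with the length of the document, which is why a low rate complements human review and does not replace it.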

Or consider a financial services firm analyzing market trends. False data can lead to millions in bad decisions. Grok’s multi-agent verification provides critical safeguards.

The cost of hallucinations isn’t just inaccuracy. It’s compliance risks, lost revenue, damaged reputations, and eroded trust. And the worst part is that most businesses don’t even realize they’re using hallucination-prone AI until it’s too late.

How to Protect Your Business

You don’t need to wait for perfect AI to start mitigating risks.

First, audit your AI usage. Identify which tasks are most critical and which have the highest risk if the AI hallucinates. Legal research, financial analysis, and customer support are obvious candidates, but even seemingly low-stakes interactions can have hidden consequences.

Second, implement verification layers. For high-stakes applications, add human review or cross-check with multiple AI sources. The goal isn’t to eliminate AI entirely. It’s to reduce the risk of bad decisions.
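A verification layer of this kind can be sketched in a few lines. The example below compares answers collected from several AI sources and flags the case for human review whenever agreement falls below a threshold. The `Verdict` type, the `cross_check` function, and the `min_agreement` parameter are hypothetical names, and exact string matching after normalization is a deliberately crude stand-in for a real comparison.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Verdict:
    answer: Optional[str]
    needs_human_review: bool


def cross_check(answers: list[str], min_agreement: float = 1.0) -> Verdict:
    """Accept the most common answer only if enough sources agree on it;
    otherwise escalate to a human reviewer."""
    if not answers:
        return Verdict(None, True)
    normalized = [a.strip().lower() for a in answers]
    top_answer, count = Counter(normalized).most_common(1)[0]
    if count / len(normalized) >= min_agreement:
        return Verdict(top_answer, False)
    return Verdict(None, True)


unanimous = cross_check(["42 U.S.C. 1983", "42 u.s.c. 1983 "])
split = cross_check(["Smith v. Jones", "Doe v. Roe", "Smith v. Jones"])
relaxed = cross_check(
    ["Smith v. Jones", "Doe v. Roe", "Smith v. Jones"], min_agreement=0.6
)
print(unanimous, split, relaxed, sep="\n")
```

Defaulting `min_agreement` to 1.0 keeps the layer conservative: any disagreement sends the case to a person, and the threshold is relaxed only where the stakes allow it.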

Third, test Grok’s multi-agent approach. The API is now available through services like APIYI, allowing you to compare hallucination rates directly with your current models. You can experiment with its two-million-token context window for document analysis or use Heavy mode for maximum accuracy in critical scenarios.
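One simple way to run such a comparison is to feed each model the same prompts and count how many of its citations fall outside a list of sources you have verified yourself. The sketch below shows the idea with stub models. It makes no real API calls: the `model` callables, the prompts, and the case names are placeholders, and you would replace the stubs with wrappers around whichever client you actually use.

```python
from typing import Callable, Iterable


def fabricated_citation_rate(
    model: Callable[[str], list[str]],
    prompts: Iterable[str],
    known_sources: set[str],
) -> float:
    """Share of a model's citations that are absent from a verified list."""
    total = 0
    fabricated = 0
    for prompt in prompts:
        for citation in model(prompt):
            total += 1
            if citation not in known_sources:
                fabricated += 1
    return fabricated / total if total else 0.0


# Placeholder data: a verified source list and two stub models.
verified = {"Smith v. Jones", "Doe v. Roe"}
test_prompts = ["prompt one", "prompt two"]


def current_model(prompt: str) -> list[str]:
    return ["Smith v. Jones", "Imaginary v. Case"]


def candidate_model(prompt: str) -> list[str]:
    return ["Smith v. Jones", "Doe v. Roe"]


current_rate = fabricated_citation_rate(current_model, test_prompts, verified)
candidate_rate = fabricated_citation_rate(candidate_model, test_prompts, verified)
print(f"current: {current_rate:.0%}, candidate: {candidate_rate:.0%}")
```

Running every model against the same prompts and the same verified list is what makes the resulting rates comparable.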

Finally, adopt a hybrid approach. Not all AI needs to be perfect. It just needs to be good enough for the task. Use Grok 4.20 for accuracy-critical applications, lighter models for routine interactions, and human review for the most sensitive decisions.
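The hybrid policy can be written down as a small routing table. The sketch below is one possible encoding: the tier names are illustrative labels and not real model identifiers, and pairing the most sensitive tasks with both the accuracy-focused model and a human reviewer is our assumption about how the three options combine.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    ROUTINE = "routine"
    ACCURACY_CRITICAL = "accuracy_critical"
    SENSITIVE = "sensitive"


@dataclass(frozen=True)
class Route:
    model_tier: str      # illustrative label, not a real model ID
    human_review: bool


# Hypothetical policy table mirroring the hybrid approach described above.
ROUTES = {
    Risk.ROUTINE: Route("lightweight", human_review=False),
    Risk.ACCURACY_CRITICAL: Route("accuracy_focused", human_review=False),
    Risk.SENSITIVE: Route("accuracy_focused", human_review=True),
}


def route(risk: Risk) -> Route:
    """Look up which model tier handles a task and whether a human signs off."""
    return ROUTES[risk]


for level in Risk:
    print(level.value, "->", route(level))
```

Keeping the policy in a single table makes it easy to audit and to change as your risk assessment evolves.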

The Trust Economy Is Here

We’re entering an era where trust isn’t just nice to have. It’s the foundation of competitive advantage. The companies that win won’t be the ones with the biggest AI models. They’ll be the ones with the most reliable ones.

Grok’s approach shows there’s a path forward: not just bigger models, but smarter architectures that verify rather than just generate. The question for businesses isn’t whether you’ll use AI with hallucination risks. It’s when you’ll switch to systems that minimize them.

Because in the age of AI, trust is no longer optional. It is fast becoming your most valuable asset.